Storage Monitoring, Diagnostics and Analytics

Get unified monitoring of 20+ storage device types from one console. Monitor all aspects of SAN storage performance – hardware, physical disks, LUNs, controllers, HBAs. Proactive alerts help resolve issues quickly.

Storage Monitoring

With the widespread adoption of virtualization and big data, storage has become a key element of IT infrastructures. A slowdown or failure of the storage tier causes key applications to fail and, in turn, affects the business.

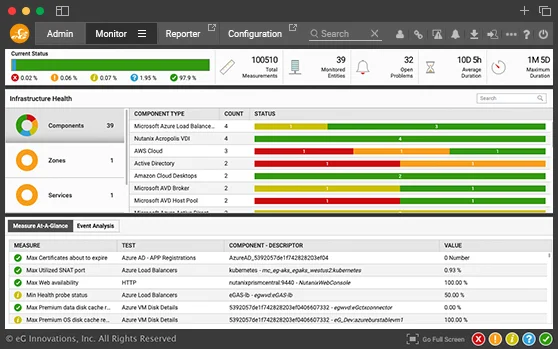

eG Enterprise SAN storage performance monitoring software is a unified monitoring, diagnosis, and reporting solution for your storage infrastructure. From a central web console, administrators can monitor all of their storage devices and correlate storage performance with that of the other tiers. This way, they can detect and fix cases where storage is the cause of a performance bottleneck.

Challenges

Many of the tiers of an IT infrastructure depend on storage. Hence, when a slowdown happens, administrators have to determine whether it is due to storage or to one of the other tiers.

Even when a problem is storage-related, further diagnosis is a challenge. Disk failures, bottlenecks in the SAN switches, excessive I/O activity on a specific logical unit (LUN), and similar conditions can all cause performance issues. In-memory storage caching adds another layer that has to be considered. To keep the infrastructure performing well, administrators must proactively detect issues, pinpoint the cause of the problem, and initiate corrective action.
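To make the idea of proactive detection concrete, here is a minimal sketch of a threshold check that flags LUNs with high latency or excessive I/O activity. It is purely illustrative and does not represent how eG Enterprise works internally: the `LunSample` type, the `flag_busy_luns` function, the sample values, and both limits are hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class LunSample:
    """One hypothetical measurement for a LUN over a sampling interval."""
    lun: str
    latency_ms: float  # average I/O latency in milliseconds
    iops: float        # I/O operations per second


def flag_busy_luns(samples, latency_limit_ms=20.0, iops_limit=5000.0):
    """Return (lun, reason) pairs for LUNs that exceed either limit.

    Both limits are illustrative assumptions, not recommended values.
    Latency is checked first, so a LUN is reported at most once.
    """
    alerts = []
    for s in samples:
        if s.latency_ms > latency_limit_ms:
            alerts.append((s.lun, "high latency"))
        elif s.iops > iops_limit:
            alerts.append((s.lun, "excessive I/O activity"))
    return alerts


# Made-up sample data for three LUNs.
samples = [
    LunSample("lun-01", latency_ms=4.2, iops=1200),
    LunSample("lun-02", latency_ms=35.0, iops=900),
    LunSample("lun-03", latency_ms=8.0, iops=7400),
]

for lun, reason in flag_busy_luns(samples):
    print(f"{lun}: {reason}")
```

In practice, a fixed threshold like this is only a starting point; a real deployment would also have to account for disk health, SAN switch load, and caching behavior, as described above.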

eG Innovations delivers a robust, reliable and extremely valuable solution to deliver maximum uptime and user satisfaction. Pre-emptive alerting helps us to address performance issues immediately before they affect system and application availability.