IT Infrastructure Monitoring

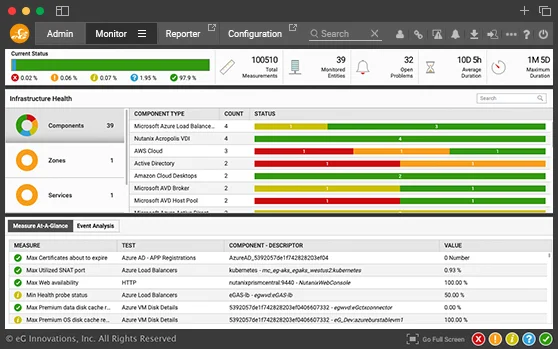

Get insights into every layer, every tier of your IT infrastructure from a central web console. Monitor network, server, storage, cloud, containers and more. AIOps-powered insights make monitoring and diagnosis easy.

Free TrialModern IT infrastructures are heterogeneous and inter-dependent. They involve a number of different tiers that all perform specialized functions: Active Directory for user authentication, firewalls for security, load balancers for scale, virtualization for agility, storage for data retrieval, etc.

Often, each IT infrastructure tier has its own specialized monitoring tool. But administrators lack a single-pane-of-glass view into the IT infrastructure as a whole. The lack of unified IT monitoring solutions often leads to finger-pointing across tiers. Problems take longer to be resolved and troubleshooting is complicated and requires expertise.

eG Enterprise stands out as a leading IT infrastructure solution that simplifies managing your IT environment. By offering comprehensive IT infrastructure monitoring services, eG enables a unified approach to monitoring IT infrastructure and bridges the gap between different IT tiers.

eG Enterprise:

Service Monitoring for

IT Infrastructures

eG Enterprise is an end-to-end IT performance management and monitoring suite for today's heterogeneous, hybrid and scale-out infrastructures. While specialized monitoring tools provide deep-dive visibility into each tier, eG Enterprise is a general practitioner for your IT infrastructure - i.e., it provides the unified console from where administrators can detect and resolve a majority of IT issues.

When it comes to monitoring IT performance, the IT infrastructure management tools you use must be comprehensive, intuitive, and versatile. As a robust infrastructure monitoring system, eG Enterprise equips IT administrators with the necessary tools to efficiently oversee all aspects of their IT landscape. This integrated approach speeds up problem resolution and enhances overall operational efficiency, making it one of the top IT infrastructure solutions for modern, dynamic IT environments.

From a single pane of glass, IT administrators can monitor all aspects of their on-premises and cloud environments - across servers, applications, virtualization, storage, containers, and more.

Whether you're dealing with virtualized systems, cloud services, or traditional on-premises hardware, eG Enterprise's IT infrastructure monitoring capabilities ensure that every component is functioning optimally, keeping your IT backbone strong and reliable.

- Get correlated visibility across your data center and track performance metrics, resource utilization configuration changes, and hardware health

- Intelligent alerts and dynamic baseline thresholds reduce alert noise and accurately pinpoint bottlenecks

- Out-of-the-box reports help with capacity planning and infrastructure right-sizing

- Integration with external ITSM, ChatOps, and workflow management tools makes IT operations more streamlined and efficient

- Use a combination of agent-based and agentless monitoring approaches to meet your custom requirements

eG Enterprise offers unparalleled monitoring coverage for IT infrastructures, supporting 10+ operating systems, 10+ virtualization technologies, 20+ storage device types, and innumerable network devices. Add custom monitoring to eG Enterprise's extendable console.

Why eG Enterprise is an Ideal Fit for Monitoring any Modern IT Infrastructure?

- Deployed in minutes

- Monitor every layer, every tier

- Built-in domain expertise

- Auto-discovery capabilities enable touch-free installation

- Machine learning and auto-baselining enables proactive alerts

- 100s of out-of-the-box reports

- APIs for administration at scale

- Auto-correlation and root-cause

- Automatic

- Universal monitoring technology

- Detailed diagnosis eliminates finger-pointing

- Deployable on-premises or as SaaS

Key Features You'll Unlock

eG Enterprise is an Enterprise-Class Solution, meaning our infrastructure monitoring tool is open, compatible, customizable, scalable, and secure. It's built to integrate seamlessly into existing and future architecture, safeguarding against internal and external threats.

Role-Based Access Control (RBAC):

Cater to different stakeholders in your organization with customizable dashboards and reports. Whether it's IT operations, helpdesk, domain experts, IT architects, or executives, eG Enterprise provides tailored views for each role, enhancing efficiency and focus.

Seamless Integration with Existing Systems and Tools:

eG fits perfectly into your existing setup. Whether it's incident management tools or existing reporting formats, eG Enterprise integrates without hassle, ensuring a smooth transition and operational continuity.

Easy Deployment & Compliance with Security Policies:

Designed with security at its core, eG Enterprise aligns with corporate security and firewall policies. It supports a push architecture and works seamlessly with secure proxy servers to ensure robust protection and easy deployment.

Simple Licensing Policy:

Experience the simplicity and flexibility in licensing. eG Enterprise avoids complicated models, offering straightforward options based on your specific monitoring needs, ensuring cost-effectiveness and ease of scalability.

Customization and Extensibility:

Tailor eG Enterprise to your unique requirements. With open APIs, integrate custom monitoring capabilities seamlessly into your IT environment. It's designed for adaptability, allowing you to extend monitoring beyond out-of-the-box capabilities without complex programming.

Multi-Tenancy Support:

Ideal for complex organizational structures. eG Enterprise offers personalized monitoring views for different departments or regions, ensuring efficiency and autonomy within a unified system.

Broad Platform Support:

No matter the diversity of your IT infrastructure, eG Enterprise supports a wide range of operating systems, virtualization platforms, cloud technologies, and storage technologies, making it a versatile choice for any large organization.

Limited Visibility Operability:

Designed to provide insights even with restricted access across different IT teams. eG Enterprise helps identify potential problem areas across domains, facilitating better communication and quicker resolution of any IT issue.

Scalability and High Availability Configuration Support:

Built to handle the demands of large enterprises, eG Enterprise offers scalability and high availability options, ensuring consistent performance and reliability even in expansive and demanding IT environments.

Server Monitoring made Easy

eG Enterprise supports monitoring any type of server (including rack server, blade server, tower server, etc.) across a wide range of vendors such as HP, Dell, BMC, Cisco, and more.

Monitoring any

Operating System

eG Enterprise is a unified solution for monitoring all server and desktop operating systems:

The Only Virtualization Monitoring Solution

with In/Out Views

eG Enterprise enables you to effectively monitor your multi-hypervisor virtual environment and identify critical bottlenecks that affect performance of business applications - whether in the hypervisor, host server, or guest virtual machine (VM). Using a patented "inside-outside" monitoring technology , eG Enterprise provides performance metrics from two vital perspectives:

One Console for Monitoring all Storage Devices

Easily detect storage bottlenecks in external SAN and NAS arrays that affect application and server performance. eG Enterprise supports monitoring over 20 storage devices including EMC, NetApp, Dell, Hitachi, HPE, and more.

Monitor Network Devices, Flows, Traffic

You can monitor the availability, fault and performance of any SNMP-enabled network device in your network including routers, firewall, switches, gateways, and load balancers from vendors such as Cisco, F5, HPE, Dell, Citrix, etc. Key network performance metrics monitored include:

Our network-aware application visibility provides correlated insight to help administrators easily identify if slow network connectivity is impacting application performance.

Converged Application Performance and Infrastructure Monitoring

Today's business-critical IT infrastructures require solutions that provide ready answers to performance problems. IT infrastructure monitoring only provides part of the answers. Application performance monitoring provides insights if the issues are in the application code. Having different infrastructure and application monitoring solutions necessitates the need for manual oversight and analysis.

eG Enterprise is one of the industry's first converged application performance and infrastructure monitoring solutions.

What Customers Say About Us