Introduction

Docker is an open platform for developing, shipping, and running applications. With Docker you can separate your applications from your infrastructure and treat that infrastructure like a managed application. This helps you ship, test, and deploy code faster, and shortens the cycle between writing code and running it. Docker does this by combining a lightweight container virtualization platform with workflows and tooling that help you manage and deploy your applications.

At its core, Docker provides a way to run almost any application securely isolated in a container. The isolation and security allow you to run many containers simultaneously on your host. The lightweight nature of containers, which run without the extra load of a hypervisor, means you can get more out of your hardware.

Docker has two major components:

- Docker: the open source container virtualization platform.

- Docker Hub: the Software-as-a-Service platform for sharing and managing Docker images.

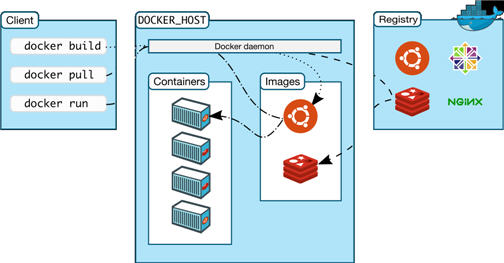

Docker uses a client-server architecture. The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing the Docker containers. Both the Docker client and the daemon can run on the same system, or you can connect a Docker client to a remote Docker daemon. The Docker client and daemon communicate via sockets or through a RESTful API.

Figure 1 : The Docker architecture

The Docker daemon runs on a host machine. The user does not interact with the daemon directly, but only through the Docker client.

The Docker client, in the form of the docker binary, is the primary user interface to Docker. It accepts commands from the user and communicates back and forth with a Docker daemon.
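The client-daemon exchange described above can be sketched in a few lines of Python. This is only an illustration, not how the `docker` binary or eG Enterprise is implemented. It assumes the daemon listens on the default Unix socket `/var/run/docker.sock` and that the calling user is allowed to read it. The names `UnixHTTPConnection` and `docker_get` are hypothetical helpers introduced for this example.

```python
import http.client
import json
import socket

# Assumed default location of the daemon's Unix socket on Linux.
DEFAULT_SOCKET = "/var/run/docker.sock"


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that travels over a Unix domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5.0):
        # The host name is only used for the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        try:
            sock.connect(self.socket_path)
        except OSError:
            sock.close()
            raise
        self.sock = sock


def docker_get(path, socket_path=DEFAULT_SOCKET):
    """Send a GET request to the daemon's REST API and return the decoded JSON."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        body = response.read()
        if response.status != 200:
            raise RuntimeError(f"daemon returned HTTP {response.status} for {path}")
        return json.loads(body)
    finally:
        conn.close()
```

On a host with a running daemon, `docker_get("/version")` should return a dictionary describing the daemon, and `docker_get("/containers/json")` a list of the running containers. If the socket is missing or not readable, the call raises an `OSError`.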

To understand Docker’s internals, you need to know about three components:

- Docker images: A Docker image is a read-only template. For example, an image could contain an Ubuntu operating system with Apache and your web application installed. Images are used to create Docker containers. Docker provides a simple way to build new images or update existing images, or you can download Docker images that other people have already created. Docker images are the build component of Docker.

- Docker registries: Docker registries hold images. These are public or private stores to which you upload images and from which you download them. The public Docker registry is provided by Docker Hub. It serves a huge collection of existing images for your use. These can be images you create yourself or images that others have already created. Docker registries are the distribution component of Docker.

- Docker containers: Docker containers are similar to a directory. A Docker container holds everything that is needed for an application to run. Each container is created from a Docker image. Docker containers can be run, started, stopped, moved, and deleted. Each container is an isolated and secure application platform. Docker containers are the run component of Docker.
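The link between images and registries shows up in how an image is named. The sketch below splits an image reference such as `ubuntu:22.04` into its registry, repository, and tag. It follows the commonly used naming conventions in simplified form (digest references such as `name@sha256:...` are not handled) and is not Docker's own parser. The function name `parse_image_reference` is hypothetical.

```python
def parse_image_reference(ref):
    """Split an image reference into (registry, repository, tag).

    Simplified assumptions: the tag defaults to "latest", the registry
    defaults to Docker Hub ("docker.io"), and single-word Docker Hub
    repositories live under the "library/" namespace.
    """
    name, tag = ref, "latest"

    # A colon counts as a tag separator only if it comes after the last slash;
    # otherwise it is a port number, as in "localhost:5000/myapp".
    last_slash = name.rfind("/")
    colon = name.rfind(":")
    if colon > last_slash:
        name, tag = name[:colon], name[colon + 1:]

    # The first path component is a registry host if it looks like one.
    first, sep, rest = name.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, repository = first, rest
    else:
        registry, repository = "docker.io", name
        if "/" not in repository:
            repository = "library/" + repository

    return registry, repository, tag
```

For example, `parse_image_reference("ubuntu:22.04")` yields `("docker.io", "library/ubuntu", "22.04")`, while `parse_image_reference("localhost:5000/myapp")` yields `("localhost:5000", "myapp", "latest")`, which points at a private registry.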

Because of its lightweight architecture and the fast access it gives to applications, Docker is rapidly gaining a foothold among IT giants. Continuous access to applications is key in such environments, so even a small slip in Docker performance can result in huge losses. To ensure 24x7 availability and consistently high performance, administrators need to closely monitor the performance and status of Docker and its associated components, promptly detect abnormalities, and fix them before end users notice. This is where eG Enterprise helps administrators.

Note:

eG Enterprise supports monitoring Docker on Linux platforms only, not on Windows.