Monitoring EMC VNX Unified Storage

eG Enterprise offers a specialized VNX Unified Storage monitoring model that tracks the key performance indicators of every critical component of EMC VNX (disks, file systems, volumes, disk array enclosures (DAEs), LUNs, and so on). It proactively alerts administrators to potential performance bottlenecks, so that they can resolve issues well before end-users complain.

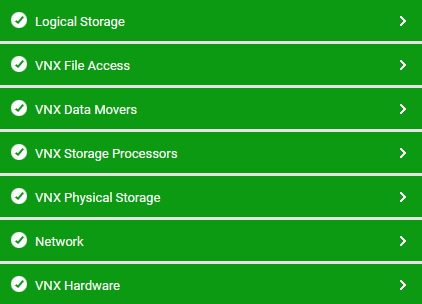

Figure 1: The layer model of EMC VNX Unified Storage

Each layer of Figure 1 above is mapped to a variety of tests, each of which reports a wealth of performance information about the VNX unified storage. Using these metrics, administrators can find quick and accurate answers to the following performance queries:

- Is the VNX storage system available over the network?

- How responsive is VNX to requests over the network?

- Are all the hardware components of the VNX storage system up and running? If not, which hardware component is unavailable: a fan, a power supply unit, or a link control card (LCC)?

- Is the VNX storage system using network bandwidth optimally? If not, which NIC on VNX is consuming bandwidth excessively?

- Is any disk too busy? If so, which one is it?

- Which disk is too slow in processing I/O requests? Which type of request does it process slowly: reads or writes?

- Has any disk failed?

- Is any disk consuming too much bandwidth? If so, which one is it?

- Which disk is running out of disk space?

- Are the read and write caches of the storage processors (SPs) used optimally? Which SP's cache may require right-sizing, and is it the read cache or the write cache?

- Which SP port is down currently?

- Is the SFP (small form-factor pluggable module) of any SP port faulted?

- Is any SP over-utilized?

- Is any SP idle?

- How are the data movers using their caches? Which cache is being used least effectively: the directory name lookup cache, the open file cache, or the kernel buffer cache?

- Which data mover has too many blocked threads?

- Which data mover is experiencing CPU and/or RAM contention?

- Is the statmon service on any data mover not running currently?

- Which data mover is processing CIFS read/write requests very slowly?

- Which data mover is processing NFS read/write requests very slowly?

- Which file system on which data mover is using too much storage space?

- Are too many I/O requests queued for any LUN? If so, which LUN is it?

- Which LUN is experiencing too many errors? What type of errors are these - hard or soft?

- Is any LUN making poor use of its read/write cache?

- Which disk volume is running out of space?

- Which disk volume has too many pending I/O requests?

- Which meta volume is experiencing a processing bottleneck?