Monitoring the RHEV Manager

eG Enterprise provides a 100% web-based RHEV Manager monitoring model, which periodically runs availability and health checks on the RHEV manager and proactively reports abnormalities.

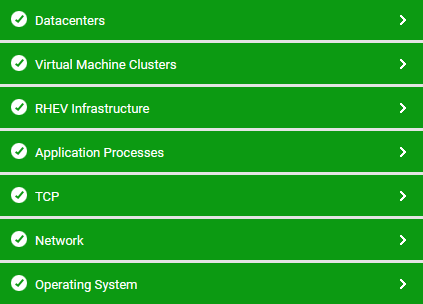

Figure 1: The layer model of the RHEV Manager

Each layer depicted in the figure above is mapped to tests, which employ agent-based or agentless mechanisms (depending upon how you want the eG Enterprise system to monitor the RHEV manager) to pull a variety of metrics from the RHEV manager. These metrics enable administrators to quickly find accurate answers to the following performance queries:

- Is the RHEV manager available over the network? If so, how quickly is it responding to requests?

- Have any error/warning events occurred on the RHEV manager? What are these errors/warnings?

- Has the RHEV manager log captured any new errors/warnings? If so, what are they?

- How many data centers have been configured on the RHEV manager? What are they, and what is the compatibility level of each one of them?

- Is any data center in a problematic state currently?

- Which data center is running short of disk space? How many clusters, RHEV servers, and VMs have been configured in that data center, and which ones are they?

- How many storage domains are operational in each data center? Which ones are they?

- Is any storage domain unavailable? If so, which one? Which VMs are using this storage domain?

- Is any storage domain running out of space? Which one is it, and which VMs will be impacted by this space crunch?

- Is any logical network currently down? Which clusters and RHEV servers are using this logical network?

- Which logical network is experiencing heavy network traffic?

- Have any errors occurred on a logical network? If so, on which network, and did the errors occur while transmitting data or while receiving it?

- Is any cluster using CPU resources excessively? If so, which cluster is it? Are any CPU-hungry VMs operating within that cluster? What are they?

- Are all clusters correctly sized in terms of memory, or are any clusters currently experiencing memory contention? If so, which cluster is it, and what is causing the memory crunch on that cluster: improperly sized hosts or memory-starved VMs?

- Which cluster has too many hosts and VMs that are powered off?

- What is the compatibility level of each cluster?
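
To make the first query in the list above concrete (whether the RHEV manager is available over the network, and how quickly it responds), the following is a minimal sketch of an availability and response-time probe. It is not how the eG tests are implemented; it only illustrates the idea using a plain TCP connection. The function name `probe_tcp`, the `ProbeResult` type, and the host and port shown in the usage note are hypothetical.

```python
import socket
import time
from typing import NamedTuple, Optional


class ProbeResult(NamedTuple):
    """Outcome of one probe (hypothetical type, for illustration only)."""
    available: bool
    response_ms: Optional[float]  # None when the connection failed


def probe_tcp(host: str, port: int, timeout: float = 5.0) -> ProbeResult:
    """Attempt one TCP connection and time how long it takes to establish."""
    start = time.perf_counter()
    try:
        # create_connection raises OSError (including timeouts) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            return ProbeResult(True, elapsed_ms)
    except OSError:
        return ProbeResult(False, None)
```

For example, `probe_tcp("rhevm.example.com", 443)` would report whether a manager at that assumed address accepts connections on that assumed port, along with the connection time in milliseconds. A successful TCP connection shows only that the port is reachable; it says nothing about the health of the manager itself.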