Scheduled Database Maintenance Activity for Microsoft SQL Server

-

Rebuilding Indexes to reduce/eliminate fragmentation

Login as the eG install user, and execute the ALTER INDEX statement to rebuild the indexes on all tables in the current context.

However, note that this statement can be run in the ONLINE mode only in the Enterprise Editions of the SQL server. The table below provides the syntax for this SQL script on various versions of the MS SQL server:

SQL Server Version Rebuild indexes with State of the eG manager while re-building indexes SQL Procedure Default fill factor Specific fill factor Online Offline MS SQL 2012 / 2014 / 2016 / 2017 / 2019 / 2022 / 2025

select 'ALTER INDEX ALL ON ['+schema_name(schema_id)+'].['+name+'] REBUILD WITH (ONLINE=ON)' from sys.objects where type='U';

Sample Output of the above SQL Script:

ALTER INDEX ALL ON [eguser].[UserLicenseReport]

REBUILD WITH (ONLINE=ON)

select 'ALTER INDEX ALL ON ['+schema_name(schema_id)+'].['+name+'] REBUILD WITH (ONLINE=OFF)' from sys.objects where type='U';

Sample Output of the above SQL Script:

ALTER INDEX ALL ON [eguser].[UserLicenseReport]

REBUILD WITH (ONLINE=OFF)

select 'ALTER INDEX ALL ON ['+schema_name(schema_id)+'].['+name+'] REBUILD WITH (FILLFACTOR=<FILL_FACTOR_PERCENT>,ONLINE=ON)' from sys.objects where type='U';

Sample Output of the above SQL Script:

ALTER INDEX ALL ON [eguser].[CUSTOMDASHBOARDTEMPLATES]

REBUILD WITH (FILLFACTOR=80,ONLINE=ON)

select 'ALTER INDEX ALL ON['+schema_name(schema_id)+'].['+name+'] REBUILD WITH (FILLFACTOR=<FILL_FACTOR_PERCENT>,ONLINE=OFF)' from sys.objects where type='U';

Sample Output of the above SQL Script:

ALTER INDEX ALL ON [eguser].[CUSTOMDASHBOARDTEMPLATES]

REBUILD WITH (FILLFACTOR=80,ONLINE=OFF)

MS SQL database on Azure

NA NA select 'ALTER INDEX ALL ON [' + schema_name ( schema_id )+ '].[' + name + '] REBUILD WITH (ONLINE= ON)' from sys . objects where type = 'U' ;

Sample Output of the above SQL Script:

ALTER INDEX ALL ON [eguser].[UserLicenseReport]

REBUILD WITH (ONLINE=ON)

select 'ALTER INDEX ALL ON [' + schema_name (schema_id )+ '].[' + name + '] REBUILD WITH(ONLINE= OFF)' from sys . objects where type = 'U' ;

Sample Output of the above SQL Script:

ALTER INDEX ALL ON [eguser].[UserLicenseReport]

REBUILD WITH (ONLINE=OFF)

You can execute these queries in batches, so as to save time in index rebuilding and to have greater control over the rebuilding process. For instance, you can copy the first five queries to the SQL Management Studio and execute them simultaneously. After those queries complete execution, you can copy five more queries and execute them at one go. This way, you can quickly and easily rebuild indexes.

-

Truncating the transaction log file:

Transaction logs can grow to a very large size and clog your disks, and can hence slow down writes into the disks and make them more resource intensive.

First, to help ensure that there is no potential for any data loss we need to determine if the space being used by the transaction log, is being used by data or if it is a free/white-space being held in the transaction log.

One way to check is by highlighting the database in the Enterprise Manager, selecting the View->TaskPad option and looking at the amount of space free/in use within the Transaction Log device.

If a large amount of space is being consumed by data, then you should perform a transaction log backup, truncate the log and then immediately perform a full SQL backup to help preserve data. If you cannot perform the transaction log backup due to the lack of available disk space, then truncate the log (ex: backup log <dbname> with truncate_only). Immediately after performing this step, you must perform a full SQL database backup in order to ensure the recoverability of your database. You should then realize that the remaining space in your transaction log is now free space that should be released back to the OS. To perform this, execute a DBCC SHRINKFILE (<TranLogLogicalName>,<minsize>) where the TranLogLogicalName is the logical name of the transaction log device for that database and the minsize is the least size that you would like to set the transaction log to (in MB).

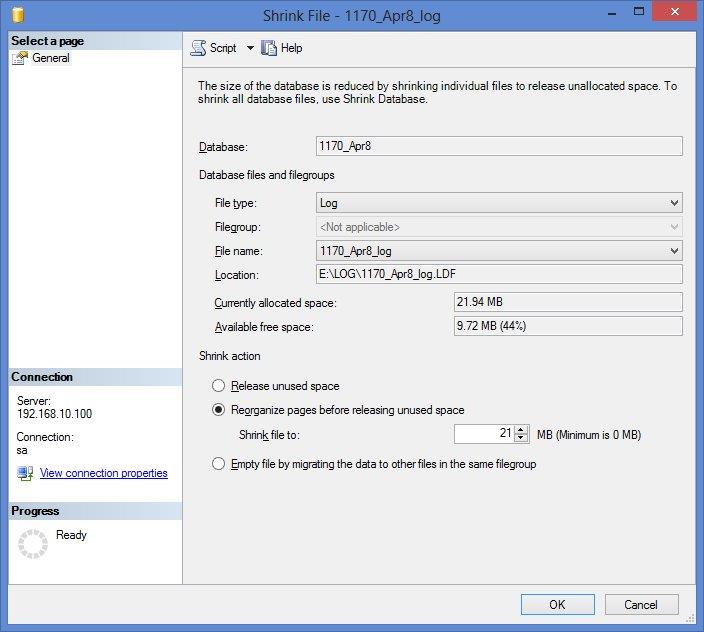

The command to use to shrink the log file is DBCC SHRINKFILE (1170_Apr8_log, 21), where 1170_Apr8_log is the logical name of the log that can be found in the Transaction Log page of the Shrink File dialog box against the field name, File Name (see Figure 1 below), and 21 is the desired size (in MB) to which the database should be shrunk to.

Figure 1 : Truncating the transaction log

You should then make sure that the database has ongoing transaction log backups that occur on a repeat frequency or whenever the size grows quite big.

-

Compressing the data:

In a database, if the data corresponding to a particular object is not compressed by default, then, the disk space consumed may be too high. To reduce the consumption of disk space and also to reduce the I/O on the database, it is necessary to compress the data. Data can be compressed either row-wise or page-wise.

Note:

Data compression is a CPU-intensive process. Therefore, it is recommended to carry out the data compression process while the state of the eG manager is OFFLINE.

The below table helps you to generate index/table rebuilds with compressed data.

SQL Server Version State of the eG manager while re-building indexes/tables with compressed data SQL Procedure Online Offline MS SQL 2016 / 2017 / 2019 / 2022

To compress a table at the ROW-level:

select ‘ALTER TABLE '+ name +' REBUILD WITH (DATA_COMPRESSION=ROW);' from sys.objects where type='U';

Sample Output of the above SQL Script:

ALTER TABLE MsSQLDatabaseTest_TREND REBUILD WITH (DATA_COMPRESSION=ROW);

To compress a table at the PAGE-level:

select ‘ALTER TABLE '+ name +' REBUILD WITH (DATA_COMPRESSION=PAGE);' from sys.objects where type='U';

Sample Output of the above SQL Script:

ALTER TABLE MsSQLDatabaseTest_TREND REBUILD WITH (DATA_COMPRESSION=PAGE);

To compress an index at the PAGE-level:

select 'alter index '+ i.name +' on '+ o.name +' rebuild with (DATA_COMPRESSION=PAGE);' from sys.indexes i inner join sys.objects o on o.type='U' and o.object_id=i.object_id and i.name is not null;

Sample Output of the above SQL Script:

alter index PK_RUMBrowserTest_TREND on RUMBrowserTest_TREND rebuild with (DATA_COMPRESSION=PAGE);

To compress an index at the ROW-level:

select 'alter index '+ i.name +' on '+ o.name +' rebuild with (DATA_COMPRESSION=ROW);' from sys.indexes i inner join sys.objects o on o.type='U' and o.object_id=i.object_id and i.name is not null;

Sample Output of the above SQL Script:

alter index PK_RUMBrowserTest_TREND on RUMBrowserTest_TREND rebuild with (DATA_COMPRESSION=ROW);

Note:

- If the PK index is compressed (at the PAGE- or ROW-level), then the corresponding table will also be compressed at the same level.

- If the PK index is compressed (at the PAGE- or ROW-level), then make sure you compress/rebuild the IDX index at the same level.

You can execute these queries in batches, so as to save time in data compression and to have greater control over the compression process. For instance, you can copy the first five queries to the SQL Management Studio and execute them simultaneously. After those queries complete execution, you can copy five more queries and execute them at one go. This way, you can quickly and easily compress data.