Evaluating a monitoring tool can be both time-consuming and frustrating. There are hundreds of products available, and each tends to serve a particular niche, so all of them seem to have some degree of value.

But in most cases, the return on investment of any performance monitoring product lies in making sense of events and providing actionable information with the least amount of effort. So, when you hear claims of “root-cause analysis” or “automated analytics,” it’s time to trust but verify.

Depending on the scope of what you’re looking to monitor and the supplier’s deployment model (i.e., cloud or on-premises), you may be able to easily tailor an evaluation copy to instrument a test bed in a pre-production or staging environment.

This allows you to get hands-on with the solution, exercise it within an environment that closely mirrors production, and understand the degree to which the monitoring solution will proactively avoid performance issues and how quickly it will isolate them when they do occur. It should also give you some idea of what’s involved in maintaining the accuracy of the analytics over time, and whether (and how) the monitor can be used in other areas.

For highly targeted IT services such as Citrix, a simple checklist may be all you need to validate the end-to-end requirements of that specific ecosystem. Sometimes, however, validation requirements are more complex and span multiple ecosystems, which may justify a formal proof-of-concept (POC).

The most effective POCs are treated as a milestone in an implementation, even if a formal selection and purchase have not yet been executed. The POC is not the best time to engage stakeholders in evaluations and demonstrations; POCs should be performed at the end of the vendor selection process.

In addition, POCs are not typically run in production environments and do not represent the entire scope of the proposed solution. A well-run POC will have a clear charter that defines the stakeholders involved, objectives, scope, and requirements. The outputs of the POC can include design documents, test results, analysis of product capabilities, communication and training plans, and architecture and interface requirements. A POC can also be performed pre- or post-sale, depending on requirements.

A Production Pilot can be performed instead of a POC for smaller or simpler deployments, or can follow a POC for larger or more complex requirements. As the name implies, it is performed in a subset of the production environment.

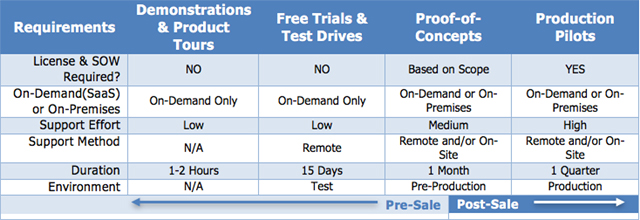

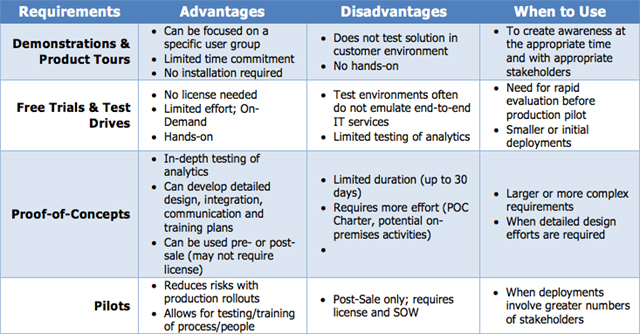

The tables below provide a few suggestions on when and how you can use various techniques to “trust but verify” a monitoring solution.
