Azure Load Balancers Test

An Azure load balancer is a Layer-4 (TCP, UDP) load balancer that provides high availability by distributing incoming traffic among healthy VMs. A load balancer health probe monitors a given port on each VM and only distributes traffic to an operational VM.

This test helps administrators detect, diagnose, and resolve performance deficiencies related to data traffic in the environment. It also reports the health probe status of applications, since the Load Balancer improves application uptime by probing the health of application instances, automatically taking unhealthy instances out of rotation, and reinstating them when they become healthy again. Detailed diagnostics provide additional insight into problems, which eases troubleshooting.
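The probe-driven rotation described above can be illustrated with a minimal sketch. This is not how Azure or the eG agent implements it; the function names `update_rotation` and `in_rotation` and the instance names are hypothetical, chosen only to show instances leaving and rejoining rotation as probe results change.

```python
def update_rotation(health, probe_results):
    """Return a new health map after applying the latest probe results.

    health and probe_results map an instance name to True (healthy)
    or False (unhealthy). Instances absent from probe_results keep
    their previous state.
    """
    updated = dict(health)
    updated.update(probe_results)
    return updated


def in_rotation(health):
    """List the instances that are currently eligible to receive traffic."""
    return sorted(name for name, ok in health.items() if ok)


# Hypothetical pool of two instances, both healthy at the start.
pool = {"vm1": True, "vm2": True}

# A failed probe takes vm2 out of rotation.
pool = update_rotation(pool, {"vm2": False})
after_failure = in_rotation(pool)

# A later successful probe reinstates vm2.
pool = update_rotation(pool, {"vm2": True})
after_recovery = in_rotation(pool)
```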

Target of the Test: A Microsoft Azure Load Balancer

Agent deploying the test: An external agent

Output of the test: One set of results for each target Microsoft Azure Load Balancer.

| Parameters | Description |

|---|---|

|

Test Period |

How often should the test be executed. |

|

Host |

The host for which the test is to be configured. |

|

Subscription ID |

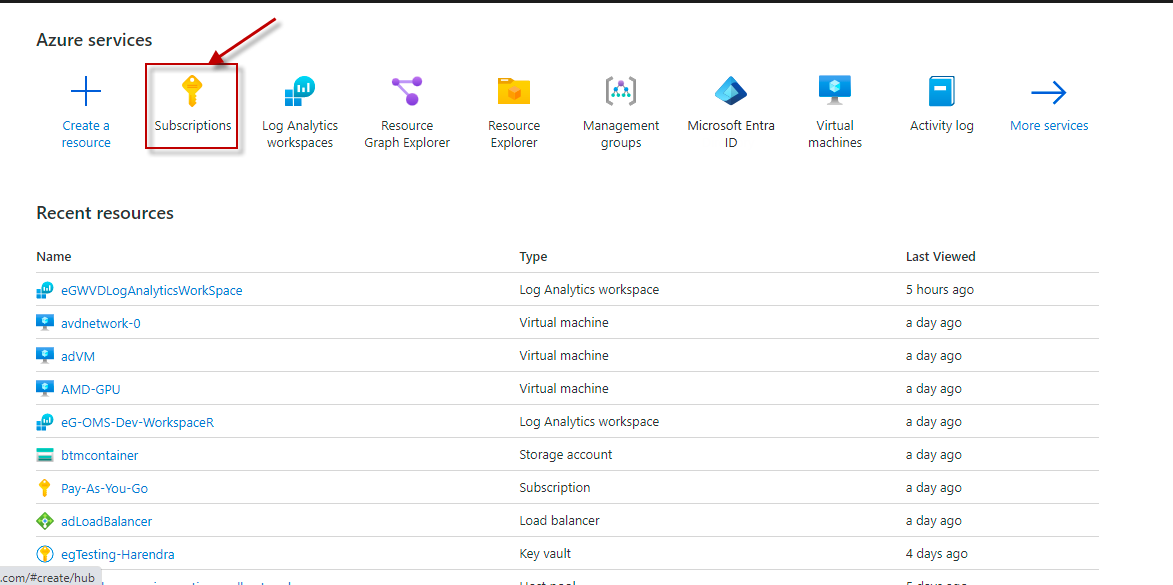

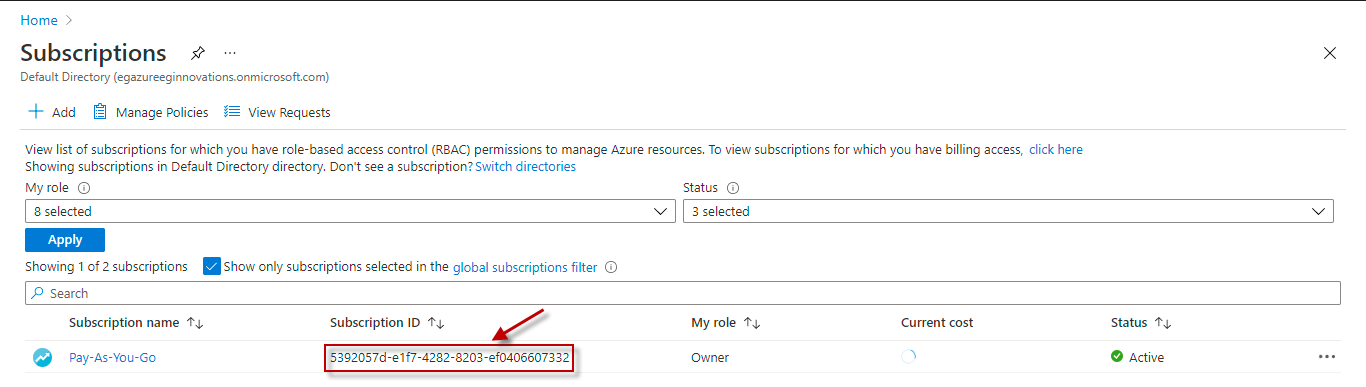

Specify the GUID which uniquely identifies the Microsoft Azure Subscription to be monitored. To know the ID that maps to the target subscription, do the following:

|

|

Tenant ID |

Specify the Directory ID of the Microsoft Entra tenant to which the target subscription belongs. To know how to determine the Directory ID, refer to Configuring the eG Agent to Monitor Microsoft Load Balancers Using Azure ARM REST API. |

|

Client ID, Client Password, and Confirm Password |

To connect to the target subscription, the eG agent requires an Access token in the form of an Application ID and the client secret value. For this purpose, you should register a new application with the Microsoft Entra tenant. To know how to create such an application and determine its Application ID and client secret, refer to Configuring the eG Agent to Monitor Microsoft Load Balancers Using Azure ARM REST API. Specify the Application ID of the created Application in the Client ID text box and the client secret value in the Client Password text box. Confirm the Client Password by retyping it in the Confirm Password text box. |

|

Proxy Host |

In some environments, all communication with the Azure cloud could be routed through a proxy server. In such environments, you should make sure that the eG agent connects to the cloud via the proxy server and collects metrics. To enable metrics collection via a proxy, specify the IP address of the proxy server and the port at which the server listens against the Proxy Host and Proxy Port parameters. By default, these parameters are set to none, indicating that the eG agent is not configured to communicate via a proxy, by default. |

|

Proxy Username, Proxy Password and Confirm Password |

If the proxy server requires authentication, then, specify a valid proxy user name and password in the Proxy Username and Proxy Password parameters, respectively. Then, confirm the password by retyping it in the Confirm Password text box. |

|

Resource Group |

A resource group is a container that holds related resources for an Azure solution. The resource group can include all the resources for the solution, or only those resources that you want to manage as a group. Specify the name of the particular Resource Group which is a part of the Load Balancer to be managed in the Resource Group text box. |

|

Load Balancer |

Load balancers improve application availability and responsiveness and prevent server overload. Each load balancer sits between client devices and backend servers, receiving and then distributing incoming requests to any available server capable of fulfilling them. Specify the name of the respective Load Balancer in the load balancer text box. |

|

DD Frequency |

Refers to the frequency with which detailed diagnosis measures are to be generated for this test. The default is 1:1. This indicates that, by default, detailed measures will be generated every time this test runs, and also every time the test detects a problem. You can modify this frequency, if you so desire. Also, if you intend to disable the detailed diagnosis capability for this test, you can do so by specifying none against DD frequency. |

|

Detailed Diagnosis |

To make diagnosis more efficient and accurate, the eG Enterprise embeds an optional detailed diagnostic capability. With this capability, the eG agents can be configured to run detailed, more elaborate tests as and when specific problems are detected. To enable the detailed diagnosis capability of this test for a particular server, choose the On option. To disable the capability, click on the Off option. The option to selectively enable/disable the detailed diagnosis capability will be available only if the following conditions are fulfilled:

|
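To show how the Tenant ID, Client ID, Client Password, Subscription ID, Resource Group, and Load Balancer parameters fit together, here is a minimal sketch of the two requests an ARM REST client would typically build. It assumes the standard Microsoft identity platform client-credentials flow and the Azure Resource Manager URL layout; how the eG agent issues these calls internally is not documented here. The function names and the `API_VERSION` value are assumptions made for illustration, and the sketch only builds the requests without sending them.

```python
from urllib.parse import quote, urlencode

# Microsoft identity platform token endpoint (client-credentials flow).
TOKEN_URL = "https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
ARM_BASE = "https://management.azure.com"
# Assumed API version for Microsoft.Network; check the one your environment supports.
API_VERSION = "2023-09-01"


def build_token_request(tenant_id, client_id, client_secret):
    """Return (url, form_body) for requesting an ARM access token."""
    url = TOKEN_URL.format(tenant_id=quote(tenant_id, safe=""))
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,          # Application ID (Client ID parameter)
        "client_secret": client_secret,  # client secret value (Client Password parameter)
        "scope": "https://management.azure.com/.default",
    })
    return url, body


def build_load_balancer_url(subscription_id, resource_group, load_balancer):
    """Return the ARM URL that identifies one load balancer resource."""
    path = "/subscriptions/{}/resourceGroups/{}/providers/Microsoft.Network/loadBalancers/{}".format(
        quote(subscription_id, safe=""),
        quote(resource_group, safe=""),
        quote(load_balancer, safe=""),
    )
    return f"{ARM_BASE}{path}?{urlencode({'api-version': API_VERSION})}"


# Placeholder values only; substitute the IDs from your own tenant.
token_url, token_body = build_token_request("my-tenant-id", "my-app-id", "my-secret")
lb_url = build_load_balancer_url("my-subscription-id", "my-rg", "my-lb")
```

The token request would be sent as an HTTP POST with a form-encoded body, and the returned access token would then be passed as a Bearer token when calling the load balancer URL. If a proxy is configured through Proxy Host and Proxy Port, both calls would be routed through it.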

| Measurement | Description | Measurement Unit | Interpretation |
|---|---|---|---|
| Data path availability | Indicates the data path availability from within a region to the load balancer front end. | Percent | A standard load balancer continuously uses the data path from within a region to the load balancer frontend, to the network that supports your VM. As long as healthy instances remain, the measurement follows the same path as your application's load-balanced traffic. The data path in use is validated. The measurement is invisible to your application and doesn’t interfere with other operations. A value of 100% indicates that the data path is fully available for load balancer operations. A value of 0% indicates that the data path is unavailable. |
| Health probe status | Indicates the status reported by the health probes that monitor the application endpoint's health. | Percent | A standard load balancer uses a distributed health-probing service that monitors your application endpoint's health according to your configuration settings. This metric provides an aggregate or per-endpoint filtered view of each instance endpoint in the load balancer pool. You can see how the load balancer views the health of your application, as indicated by your health probe configuration. A very high value is desired for this measure. |
| Data transmitted | Indicates the rate at which data is transmitted. | MB | A standard load balancer reports the data processed per front end. You may notice that the bytes aren’t distributed equally across the backend instances. This is expected, as the Azure Load Balancer algorithm is based on flows. A high value for this measure indicates that the data transmission is high. |
| Packet count | Indicates the number of data packets processed per front end. | Number | A standard load balancer reports the packets processed per front end. |
| SYN count | Indicates the number of TCP SYN packets that were received. | Number | A standard load balancer doesn’t terminate Transmission Control Protocol (TCP) connections or interact with TCP or User Datagram Protocol (UDP) flows. Flows and their handshakes are always between the source and the VM instance. To better troubleshoot your TCP scenarios, you can use the SYN packet counters to understand how many TCP connection attempts are made. The metric reports the number of TCP SYN packets that were received. |
| SNAT connection count | Indicates the number of SNAT connections, that is, the outbound originated flows for which the application relies on SNAT. | Number | A standard load balancer reports the number of outbound flows that are masqueraded to the Public IP address frontend. SNAT ports are an exhaustible resource. This metric can give an indication of how heavily your application is relying on SNAT for outbound originated flows. Counters for successful and failed outbound SNAT flows are reported. The counters can be used to troubleshoot and understand the health of your outbound flows. |
| Allocated SNAT ports | Indicates the number of SNAT ports allocated per backend instance. | Number | |
| Used SNAT ports | Indicates the number of SNAT ports that are utilized per backend instance. | Number | |
| Utilized SNAT port | Indicates the percentage of SNAT ports that are utilized per backend instance. | Percent | |
| Provisioning state | Indicates the current Provisioning state of the Load Balancer. | | The values reported by this measure and its numeric equivalents are mentioned in the table below: Note: By default, this measure reports the Measure Values listed in the table above to indicate the current Provisioning state of the Load Balancer. |
| Number of frontend IP configurations | Indicates the number of frontend IP configurations. | Number | A frontend IP configuration is the IP address of the Azure Load Balancer and the point of contact for clients. These IP addresses can be either public or private. Use the detailed diagnosis of this measure to know the Name, IP address, Rules count and Rules (Name:Type). |
| Number of backend pools | Indicates the number of backend pools. | Number | A backend pool is the group of virtual machines or instances in a virtual machine scale set that serves incoming requests. Use the detailed diagnosis of this measure to know the Backend pool, Resource name:Resource status:IP address:Network interface:Availability zone, Rules count and Rules (Name:Type). |
| Number of health probes | Indicates the number of health probes. | Number | A health probe is used to determine the health status of the instances in the backend pool. The probe determines whether an instance is healthy and can receive traffic. Use the detailed diagnosis of this measure to know the Name, Protocol, Port, Path, Used by and Rules (Name:Type). |
| Number of load balancing rules | Indicates the number of load balancing rules. | Number | A load balancing rule defines how incoming traffic is distributed to the instances within the backend pool. A load balancing rule maps a given frontend IP configuration and port to multiple backend IP addresses and ports. Load balancing rules are for inbound traffic only. Use the detailed diagnosis of this measure to know the Name, Load balancing rule, Backend pool and Health probe. |
| Number of inbound NAT rules | Indicates the number of inbound NAT rules. | Number | An inbound NAT rule forwards incoming traffic sent to a frontend IP address and port combination. The traffic is sent to a specific virtual machine or instance in the backend pool. Port forwarding is done by the same hash-based distribution as load balancing. Use the detailed diagnosis of this measure to know the Name, Frontend IP, Frontend port and Service. |
| Number of outbound rules | Indicates the number of outbound rules. | Number | An outbound rule configures outbound Network Address Translation (NAT) for all virtual machines or instances identified by the backend pool. This rule enables instances in the backend to communicate (outbound) to the internet or other endpoints. |
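The three SNAT port measures are related by simple arithmetic: the utilized percentage is the used ports divided by the allocated ports, times 100. The sketch below illustrates that relationship. The function names and the 80% warning threshold are hypothetical choices for illustration, not values defined by eG Enterprise or Azure.

```python
def snat_port_utilization(used_ports: int, allocated_ports: int) -> float:
    """Return the percentage of allocated SNAT ports that are in use."""
    if allocated_ports <= 0:
        # No ports allocated: report 0 rather than dividing by zero.
        return 0.0
    return 100.0 * used_ports / allocated_ports


def is_near_exhaustion(used_ports: int, allocated_ports: int,
                       threshold_pct: float = 80.0) -> bool:
    """Flag a backend instance whose SNAT usage meets a hypothetical threshold."""
    return snat_port_utilization(used_ports, allocated_ports) >= threshold_pct


# Example: 256 of 1024 allocated ports in use on one backend instance.
utilization = snat_port_utilization(256, 1024)
warning = is_near_exhaustion(900, 1024)
```

Because SNAT ports are an exhaustible resource, a utilization figure that keeps climbing toward 100% is an early sign that outbound flows may start failing.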