Azure Storage Details Test

Azure Storage is a Microsoft-managed cloud service that provides storage that is highly available, secure, durable, scalable and redundant.

An Azure storage account is an access point to all the elements that compose the Azure storage realm. Once the user creates the storage account, they can select the level of resilience needed and Azure will take care of the rest. A single storage account can store up to 500TB of data.

With an Azure storage account, you have access to the following types of storage:

- Azure Blob Storage: Blob Storage is Microsoft Azure’s service for storing binary large objects, or blobs, which are typically composed of unstructured data such as text, images, and videos, along with their metadata.

- Azure Table Storage: Azure Table Storage is a scalable, NoSQL, key-value data storage system that can be used to store large amounts of data in the cloud. This storage offering has a schemaless design, and each table has rows that are composed of key-value pairs.

- Azure Queue Storage: Azure Queue Storage is a service that allows users to store high volumes of messages, process them asynchronously, and consume them when needed, while keeping costs down by leveraging a pay-per-use pricing model.

- Azure File Storage: Azure Files is a shared network file storage service that gives administrators access to native SMB file shares in the cloud. The Azure File service lets applications running on cloud VMs share files among themselves by using the standard SMB protocol and familiar file system APIs such as WriteFile or ReadFile.

Also, by configuring geo-redundant storage accounts, you can have data in your primary storage accounts copied to a second region. This way, if the primary region is unavailable, you can initiate a fail over to the secondary region, thereby ensuring the uninterrupted delivery of storage services.
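The failover decision described above can be sketched as a small function. This is only an illustration and not eG Enterprise or Azure code: the function name `recommend_action` and the state strings `Available` and `Unavailable` are assumptions made for this example.

```python
def recommend_action(primary_state: str, secondary_state: str) -> str:
    """Suggest an action from the availability of the two locations.

    The state strings are hypothetical labels used only in this sketch.
    """
    primary_up = primary_state == "Available"
    secondary_up = secondary_state == "Available"

    if primary_up and secondary_up:
        # Both copies are reachable; nothing to do.
        return "no action"
    if primary_up:
        # Requests are still served, but the replica needs attention.
        return "investigate secondary"
    if secondary_up:
        # Primary is down but the geo-replicated copy is reachable.
        return "initiate failover to secondary"
    # Neither copy is reachable, so a failover cannot help.
    return "escalate: both locations unavailable"
```

For example, `recommend_action("Unavailable", "Available")` returns `"initiate failover to secondary"`.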

In summary, what makes Azure Storage the most coveted storage platform is its large storage capacity, reliability, versatility (ability to handle heterogeneous storage types), and cost effectiveness. Additionally, because Azure storage eases cloud application development, it has found favor with cloud application developers as well. This means that any issue that undermines the performance of a storage account can result in unexpected application outages or poor application performance. Such issues can include reduced availability of the Azure storage service, a lack of adequate storage space in an account, and poor responsiveness of an account to requests. If such applications front-end your critical business services, then service quality will suffer, causing SLA violations, loss of revenue, and a rise in penalties. To avoid this, it is imperative that administrators monitor the availability, usage, and processing ability of each Azure storage account that is configured for a target subscription, quickly capture abnormalities, and rapidly initiate measures to right the wrongs before application performance degrades. This is where the Azure Storage Details test helps!

This test automatically discovers the Azure storage accounts configured for the monitored Azure subscription. For each discovered account, the test then reports the provisioning state of that account. Accounts that are in an Error state are highlighted in the process. The test also evaluates how resilient each storage account is by monitoring the availability of the primary and secondary locations; alerts are sent out if both locations are unavailable. The test also measures how quickly each storage account processes requests, thus pointing you to the accounts with high latency. Furthermore, the test provides real-time insights into the usage and processing ability of each storage type. As a result, when unusual space usage or abnormal slowness is observed in an account, administrators will be able to accurately tell which type of storage is at the root of the problem: blob, table, file, or queue.

This way, the test not only leads administrators to the problem areas of a storage account, but also reveals the probable cause of these problems, thereby enabling administrators to act quickly and eliminate the issues.
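The per-type comparison described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function name `largest_consumer` and the sample figures are hypothetical, and the test itself may perform this comparison differently.

```python
def largest_consumer(used_mb: dict[str, float]) -> tuple[str, float]:
    """Return the storage type using the most space and its share of the total (percent)."""
    total = sum(used_mb.values())
    if total <= 0:
        raise ValueError("no usage reported")
    # Pick the storage type with the highest used capacity.
    name = max(used_mb, key=used_mb.get)
    return name, round(100.0 * used_mb[name] / total, 1)


# Hypothetical per-type usage (in MB) for one storage account.
usage = {"blob": 7200.0, "file": 1800.0, "table": 600.0, "queue": 400.0}
print(largest_consumer(usage))  # prints ('blob', 72.0)
```

In this made-up sample, blob storage accounts for 72% of the space in use, so it would be the first place to look.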

Target of the Test: A Microsoft Azure Subscription

Agent deploying the test: A remote agent

Output of the test: One set of results for each Azure Storage account configured for every resource group in the target Azure Subscription

| Parameters | Description |

|---|---|

|

Test Period |

How often the test should be executed. |

|

Host |

The host for which the test is to be configured. |

|

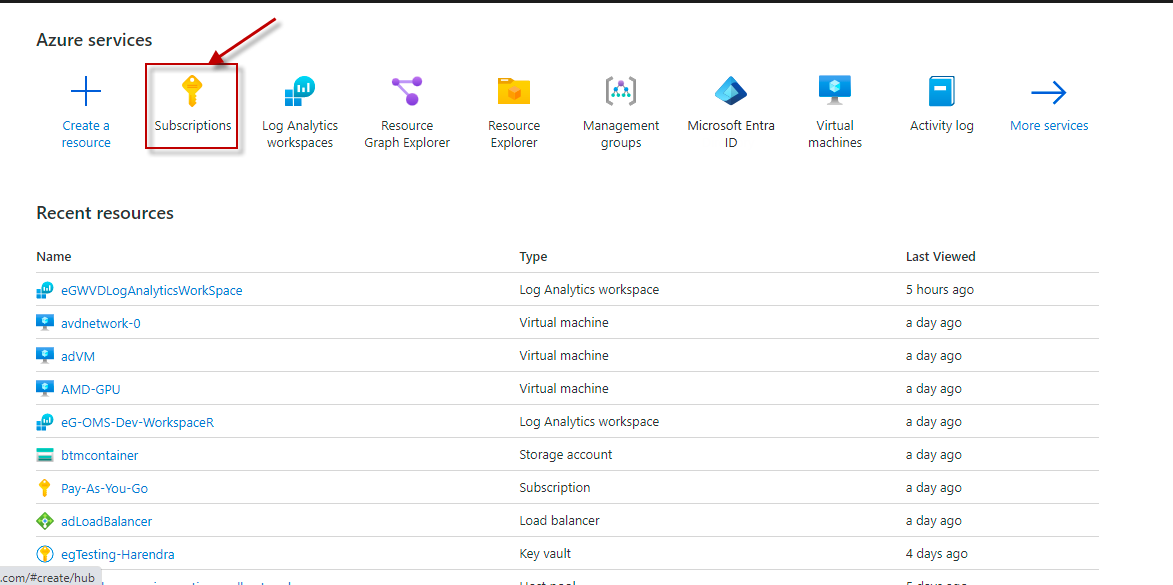

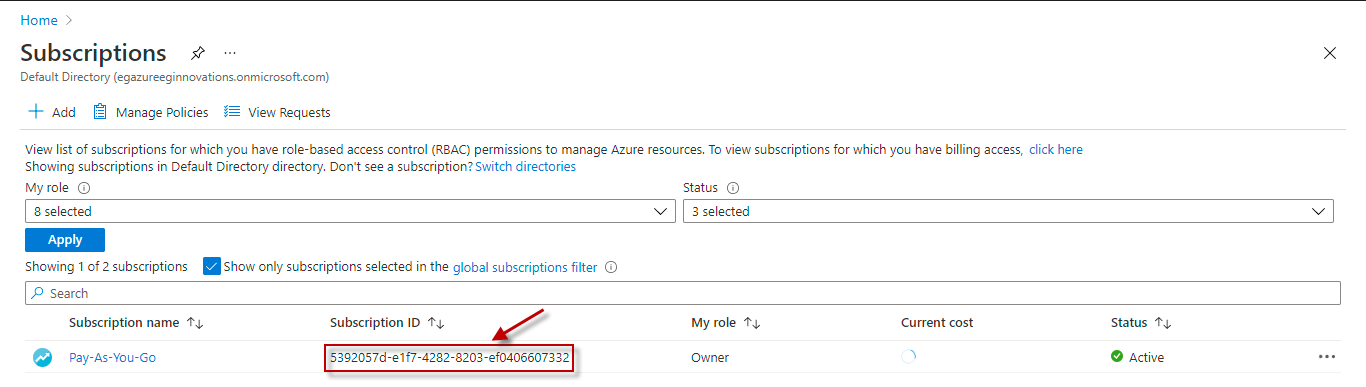

Subscription ID |

This field will be automatically populated if you have chosen to automatically fulfill the pre-requisites for monitoring the Microsoft Azure Subscription. Otherwise, specify the GUID that uniquely identifies the Microsoft Azure Subscription to be monitored in this text box.

|

|

Tenant ID |

This field will be automatically populated if you have chosen to automatically fulfill the pre-requisites for monitoring the Microsoft Azure Subscription. Otherwise, specify the Directory ID of the Microsoft Entra ID tenant to which the target subscription belongs in this text box. |

|

Client ID, Client Password, and Confirm Password |

To connect to the target subscription, the eG agent requires an access token, which it obtains using an Application ID and a client secret value. For this purpose, you should register a new application with the Microsoft Entra tenant. To know how to create such an application and determine its Application ID and client secret, refer to Configuring the eG Agent to Monitor a Microsoft Azure Subscription Using Azure ARM REST API. Specify the Application ID of the created application in the Client ID text box and the client secret value in the Client Password text box. Then, confirm the client secret by retyping it in the Confirm Password text box. |

|

Proxy Host and Proxy Port |

In some environments, all communication with the Azure cloud may be routed through a proxy server. In such environments, you should make sure that the eG agent connects to the cloud via the proxy server and collects metrics. To enable metrics collection via a proxy, specify the IP address of the proxy server and the port at which the server listens against the Proxy Host and Proxy Port parameters. By default, these parameters are set to none, indicating that the eG agent is not configured to communicate via a proxy. |

|

Proxy Username, Proxy Password and Confirm Password |

If the proxy server requires authentication, specify a valid proxy user name and password in the Proxy Username and Proxy Password parameters, respectively. Then, confirm the password by retyping it in the Confirm Password text box. |

|

Detailed Diagnosis |

To make diagnosis more efficient and accurate, eG Enterprise embeds an optional detailed diagnostic capability. With this capability, the eG agents can be configured to run detailed, more elaborate tests as and when specific problems are detected. To enable the detailed diagnosis capability of this test for a particular server, choose the On option. To disable the capability, click on the Off option. The option to selectively enable/disable the detailed diagnosis capability will be available only if the following conditions are fulfilled:

|
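To illustrate how the Tenant ID, Client ID, and Client Password parameters fit together, here is a minimal Python sketch of the standard OAuth 2.0 client-credentials request to Microsoft Entra ID for an Azure Resource Manager token. This is not the eG agent's code, and the source does not document how the agent itself obtains the token. The function names `build_token_request` and `make_opener` are hypothetical, and treating `none` as "no proxy" simply mirrors the parameter default described above. The sketch only builds the request; nothing is sent.

```python
from urllib.parse import urlencode
from urllib.request import ProxyHandler, Request, build_opener


def build_token_request(tenant_id: str, client_id: str, client_secret: str) -> Request:
    """Build (but do not send) a client-credentials token request."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,          # Application ID of the registered app
        "client_secret": client_secret,  # client secret value
        "scope": "https://management.azure.com/.default",
    }).encode("ascii")
    return Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


def make_opener(proxy_host: str = "none", proxy_port: str = "none"):
    """Return a URL opener, routed through a proxy unless the host is 'none'."""
    if proxy_host.lower() == "none":
        return build_opener()
    proxy = f"http://{proxy_host}:{proxy_port}"
    return build_opener(ProxyHandler({"http": proxy, "https": proxy}))
```

Calling `make_opener(...).open(build_token_request(...))` would send the request. On success, the JSON response carries an `access_token` field that is then passed as a bearer token to the Azure ARM REST API.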

| Measurement | Description | Measurement Unit | Interpretation |

|---|---|---|---|

|

Provisioning state |

Indicates the current provisioning status of this account. |

|

The values reported by this measure and its numeric equivalents are mentioned in the table below:

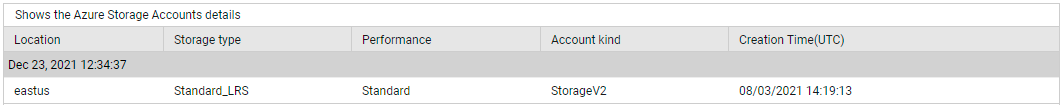

Note: By default, this measure reports the Measure Values listed in the table above to indicate the current provisioning status of a storage account. In the graph of this measure however, the same is represented using the numeric equivalents only. Use the detailed diagnosis of this measure to know the location of the storage, the storage type, when it was created, and more. |

||||||||||

|

Disk primary state |

Indicates whether or not the primary location of this storage account is available. |

|

The values reported by this measure and its numeric equivalents are mentioned in the table below:

Note: By default, this measure reports the Measure Values listed in the table above to indicate whether/not the storage account is available in its primary location. In the graph of this measure however, the same is represented using the numeric equivalents only. If the value of this measure is Unavailable for any storage account, it is a cue to administrators to initiate a fail over to the secondary location. |

||||||||||

|

Disk secondary state |

Indicates whether/not the secondary location of this storage account is available. |

|

The values reported by this measure and its numeric equivalents are mentioned in the table below:

Note: By default, this measure reports the Measure Values listed in the table above to indicate whether/not the secondary location of the storage account is available. In the graph of this measure however, the same is represented using the numeric equivalents only. If both the Disk primary state and Disk secondary state measures report the value Unavailable for a storage account, it is worrisome, because it implies that all copies of the storage account are inaccessible to applications. This can bring application operations to a standstill! |

||||||||||

|

Storage account used capacity |

Indicates the amount of storage space in this account that is in use currently. |

MB |

Compare the value of this measure across accounts to identify the resource-hungry storage accounts. Make sure that such accounts are sized with sufficient storage space to fulfill the space requirements of dependent applications. If a storage account is found to be space-hungry, then compare the value of the Used file capacity, Used blob capacity, Used table capacity, Used queue capacity, and the Amount of storage used by snapshots measures of that storage account, to know what type of storage is hogging the storage space and is contributing to the abnormal usage. |

||||||||||

|

Used file capacity |

Indicates the amount of storage space in this account that is used by file storage. |

MB |

If the Storage account used capacity measure reports an unusually high value for an account, then compare the value of this measure with that of the Used blob capacity, Used table capacity, Used queue capacity, and the Amount of storage used by snapshots measures to accurately identify the type of storage that is responsible for the abnormal usage - is it file storage? blob storage? table storage? queue storage? or snapshots? |

||||||||||

|

Used blob capacity |

Indicates the amount of storage space in this account that is used by blob storage. |

MB |

If the Storage account used capacity measure reports an unusually high value for an account, then compare the value of this measure with that of the Used file capacity, Used table capacity, Used queue capacity, and the Amount of storage used by snapshots measures to accurately identify the type of storage that is responsible for the abnormal usage - is it file storage? blob storage? table storage? queue storage? or snapshots? |

||||||||||

|

Used table capacity |

Indicates the amount of storage space in this account that is used by table storage. |

MB |

If the Storage account used capacity measure reports an unusually high value for an account, then compare the value of this measure with that of the Used blob capacity, Used file capacity, Used queue capacity, and the Amount of storage used by snapshots measures to accurately identify the type of storage that is responsible for the abnormal usage - is it file storage? blob storage? table storage? queue storage? or snapshots? |

||||||||||

|

Used queue capacity |

Indicates the amount of storage space in this account that is used by queue storage. |

MB |

If the Storage account used capacity measure reports an unusually high value for an account, then compare the value of this measure with that of the Used blob capacity, Used table capacity, Used file capacity, and the Amount of storage used by snapshots measures to accurately identify the type of storage that is responsible for the abnormal usage - is it file storage? blob storage? table storage? queue storage? or snapshots? |

||||||||||

|

Total requests in storage account |

Indicates the number of requests made to this storage account across all storage types. |

Number |

|

||||||||||

|

Total Ingress in storage account |

Indicates the amount of ingress data consumed by this storage account across all storage types. |

MB |

A default ingress limit is set for different types of storage accounts. The ingress limit refers to the maximum amount of data that a storage type can receive. If the value of this measure is unusually high for any storage account, then compare the value of the File share ingress, Blob ingress, Table ingress, and Queue ingress measures to know which storage type is receiving the maximum amount of data. |

||||||||||

|

Total egress in storage account |

Indicates the amount of egress data consumed by this storage account across all storage types. |

MB |

A default egress limit is set for different types of storage accounts. The egress limit refers to the maximum amount of data that a storage type can send. If the value of this measure is unusually high for any storage account, then compare the value of the File share egress, Blob egress, Table egress, and Queue egress measures to know which storage type is sending the maximum amount of data. |

||||||||||

|

Success server total latency |

Indicates the average time taken by the storage types in this storage account to process requests successfully. |

Secs |

Server latency is the interval from when an Azure storage account receives the last packet of the request until the first packet of the response is returned from that account. In simpler terms, it means the time taken by the Azure storage account to process any given request. End-to-end latency is the interval from when the Azure storage account receives the first packet of the request until that storage account receives a client acknowledgment on the last packet of the response. In simpler terms it means the round trip of any operation starting at the client application, plus the time taken for processing the request at the storage account and then coming back to the client application. If you find that end-to-end latency is significantly higher than server latency, then investigate and address the source of the additional latency. The main factor influencing end-to-end latency is operation size. It takes longer to complete larger operations, due to the amount of data being transferred over the network and processed by Azure Storage. Client configuration factors such as concurrency and threading also affect latency. Client resources including CPU, memory, local storage, and network interfaces can also affect latency. If the server latency is abnormally high, then it could be because the storage sub-system is experiencing serious processing deficiencies. In this case, you may want to know which specific storage type is unable to service requests quickly - is it blob storage? table storage? file storage? or queue storage? For this, compare the value of the File share success server latency, Blob success server latency, Table success server latency, and Queue success server latency measures. |

||||||||||

|

Success end-to-end total latency |

Indicates the average end-to-end latency of successful requests made to this storage account. |

Secs |

|||||||||||

|

Storage account service availability |

Indicates the percentage availability of this storage account. |

Percent |

Ideally, the value of this measure should be 100%. This means that this storage account is available. Any value lower than 100% is a cause for concern, as it implies reduced availability of the storage service. All unexpected errors result in reduced availability. |

||||||||||

|

File shares count |

Indicates the number of file shares in this storage account |

Number |

Use the detailed diagnosis of this measure to know which file shares are in this account. For each file share, the detailed diagnosis further reveals when that file share was last modified, the lease state and duration, the access tier and its status, the protocols enabled, the share size quota, the file share usage (in bytes), and when a snapshot of that share was taken. |

||||||||||

|

Files in the storage account |

Indicates the number of files in this storage account |

Number |

|

||||||||||

|

Snapshots present in storage account |

Indicates the number of snapshots present on the share in this storage account's Azure Files service. |

Number |

Azure Files provides the capability to take share snapshots of file shares. Share snapshots capture the share state at that point in time. |

||||||||||

|

File share capacity quota |

Indicates the upper limit on the amount of storage that can be used by this storage account's Azure Files Service. |

MB |

If the value of the Used file capacity measure is equal or close to the value of this measure, it means that the capacity quota has been / is about to be exhausted. In other words, it implies that the Azure Files service is about to run out of free storage space. To avoid this, you may either want to delete unwanted files and make room, or increase the storage capacity quota. |

||||||||||

|

File share requests |

Indicates the number of requests made to this storage account's Azure Files Service. |

Number |

|

||||||||||

|

File share ingress |

Indicates the amount of ingress data consumed by this storage account's Azure Files Service. |

MB |

A default ingress limit is set for File storage. The ingress limit refers to the maximum amount of data that File storage can receive. By comparing the value of this measure with the ingress limit, you can be forewarned if the capacity limits set for your File storage need to be increased to ensure peak storage performance. |

||||||||||

|

File share egress |

Indicates the amount of egress data consumed by this storage account's Azure Files Service. |

MB |

A default egress limit is set for File storage. The egress limit refers to the maximum amount of data that File storage can send. By comparing the value of this measure with the egress limit, you can be forewarned if the capacity limits set for File storage need to be increased to ensure peak storage performance. |

||||||||||

|

File share success server latency |

Indicates the average time taken by the File storage in this account to process requests successfully. |

Secs |

Server latency of File storage is the interval from when File storage receives the last packet of the request until the first packet of the response is returned from that storage. In simpler terms, it means the time taken by File storage to process any given request. End-to-end latency of File storage is the interval from when the File storage receives the first packet of the request until that storage receives a client acknowledgment on the last packet of the response. In simpler terms it means the round trip of any operation starting at the client application, plus the time taken for processing the request at the File storage and then coming back to the client application. If you find that end-to-end latency is significantly higher than server latency, then investigate and address the source of the additional latency. The main factor influencing end-to-end latency is operation size. It takes longer to complete larger operations, due to the amount of data being transferred over the network and processed by File storage. Client configuration factors such as concurrency and threading also affect latency. Client resources including CPU, memory, local storage, and network interfaces can also affect latency. If the server latency is abnormally high, then it could imply that the File storage is experiencing serious processing deficiencies. |

||||||||||

|

File share success end-to-end latency |

Indicates the average end-to-end latency of successful requests made to the File storage in this account. |

Secs |

|||||||||||

|

File share availability |

Indicates the percentage availability of this storage account's Azure Files Service. |

Percent |

Ideally, the value of this measure should be 100%. This means that the File storage service is available. Any value lower than 100% is a cause for concern, as it implies reduced availability of the service. All unexpected errors result in reduced availability. |

||||||||||

|

Blob containers count |

Indicates the number of containers in this storage account. |

Number |

Use the detailed diagnosis of this measure to know which blob containers are in this account. For each container, the detailed diagnosis further reveals when that container was last modified, the lease state and duration, the configured retention period, the version, and more. |

||||||||||

|

Blob objects stored |

Indicates the number of blob objects stored in this storage account. |

Number |

|

||||||||||

|

Azure data lake capacity |

Indicates the total amount of blob storage used in this storage account. |

MB |

|

||||||||||

|

Blob requests |

Indicates the number of requests made to the Blob storage in this storage account. |

Number |

|

||||||||||

|

Blob ingress |

Indicates the amount of ingress data consumed by this storage account's Blob storage. |

MB |

A default ingress limit is set for Blob storage. The ingress limit refers to the maximum amount of data that Blob storage can receive. By comparing the value of this measure with the ingress limit, you can be forewarned if the capacity limits set for your Blob storage need to be increased to ensure peak storage performance. |

||||||||||

|

Blob egress |

Indicates the amount of egress data consumed by this storage account's Blob storage. |

MB |

A default egress limit is set for Blob storage. The egress limit refers to the maximum amount of data that Blob storage can send. By comparing the value of this measure with the egress limit, you can be forewarned if the capacity limits set for Blob storage need to be increased to ensure peak storage performance. |

||||||||||

|

Blob success server latency |

Indicates the average time taken by the Blob storage in this account to process requests successfully. |

Secs |

Server latency of Blob storage is the interval from when Blob storage receives the last packet of the request until the first packet of the response is returned from that storage. In simpler terms, it means the time taken by Blob storage to process any given request. End-to-end latency of Blob storage is the interval from when the Blob storage receives the first packet of the request until that storage receives a client acknowledgment on the last packet of the response. In simpler terms it means the round trip of any operation starting at the client application, plus the time taken for processing the request at the Blob storage and then coming back to the client application. If you find that end-to-end latency is significantly higher than server latency, then investigate and address the source of the additional latency. The main factor influencing end-to-end latency is operation size. It takes longer to complete larger operations, due to the amount of data being transferred over the network and processed by Blob storage. Client configuration factors such as concurrency and threading also affect latency. Client resources including CPU, memory, local storage, and network interfaces can also affect latency. If the server latency is abnormally high, then it could imply that the Blob storage is experiencing serious processing deficiencies. |

||||||||||

|

Blob success end-to-end latency |

Indicates the average end-to-end latency of successful requests made to the Blob storage in this account. |

Secs |

|||||||||||

|

Blob containers availability |

Indicates the percentage availability of Blob storage containers in this storage account. |

Percent |

Ideally, the value of this measure should be 100%. This means that the Blob storage is available. Any value lower than 100% is a cause for concern, as it implies reduced availability of the storage. All unexpected errors result in reduced availability. |

||||||||||

|

Table shares count |

Indicates the number of tables in this storage account. |

Number |

|

||||||||||

|

Tables entity count |

Indicates the number of table entities in this storage account. |

Number |

An entity is a set of properties, similar to a database row. A property is a name-value pair. Each entity can include up to 252 properties to store data. |

||||||||||

|

Table requests |

Indicates the number of requests to the Table storage in this storage account. |

Number |

|

||||||||||

|

Table ingress |

Indicates the amount of ingress data consumed by this storage account's Table storage. |

MB |

A default ingress limit is set for Table storage. The ingress limit refers to the maximum amount of data that Table storage can receive. By comparing the value of this measure with the ingress limit, you can be forewarned if the capacity limits set for your Table storage need to be increased to ensure peak storage performance. |

||||||||||

|

Table egress |

Indicates the amount of egress data consumed by this storage account's Table storage. |

MB |

A default egress limit is set for Table storage. The egress limit refers to the maximum amount of data that Table storage can send. By comparing the value of this measure with the egress limit, you can be forewarned if the capacity limits set for Table storage need to be increased to ensure peak storage performance. |

||||||||||

|

Table success server latency |

Indicates the average time taken by the Table storage in this account to process requests successfully. |

Secs |

Server latency of Table storage is the interval from when Table storage receives the last packet of the request until the first packet of the response is returned from that storage. In simpler terms, it means the time taken by Table storage to process any given request. End-to-end latency of Table storage is the interval from when the Table storage receives the first packet of the request until that storage receives a client acknowledgment on the last packet of the response. In simpler terms it means the round trip of any operation starting at the client application, plus the time taken for processing the request at the Table storage and then coming back to the client application. If you find that end-to-end latency is significantly higher than server latency, then investigate and address the source of the additional latency. The main factor influencing end-to-end latency is operation size. It takes longer to complete larger operations, due to the amount of data being transferred over the network and processed by Table storage. Client configuration factors such as concurrency and threading also affect latency. Client resources including CPU, memory, local storage, and network interfaces can also affect latency. If the server latency is abnormally high, then it could imply that the Table storage is experiencing serious processing deficiencies. |

||||||||||

|

Table success end-to-end latency |

Indicates the average end-to-end latency of successful requests made to the Table storage in this account. |

Secs |

|||||||||||

|

Table availability |

Indicates the percentage availability of this storage account's Table storage. |

Percent |

Ideally, the value of this measure should be 100%. This means that the Table storage service is available. Any value lower than 100% is a cause for concern, as it implies reduced availability of the service. All unexpected errors result in reduced availability. |

||||||||||

|

Queue shares count |

Indicates the number of queues in this storage account. |

Number |

Use the detailed diagnosis of this measure to know which queues are in this account. |

||||||||||

|

Queue messages count |

Indicates the number of unexpired queue messages in this storage account. |

Number |

|

||||||||||

|

Queue requests |

Indicates the number of requests to the Queue storage in this storage account. |

Number |

|

||||||||||

|

Queue ingress |

Indicates the amount of ingress data consumed by this storage account's Queue storage. |

MB |

A default ingress limit is set for Queue storage. The ingress limit refers to the maximum amount of data that Queue storage can receive. By comparing the value of this measure with the ingress limit, you can be forewarned if the capacity limits set for your Queue storage need to be increased to ensure peak storage performance. |

||||||||||

|

Queue egress |

Indicates the amount of egress data consumed by this storage account's Queue storage. |

MB |

A default egress limit is set for Queue storage. The egress limit refers to the maximum amount of data that Queue storage can send. By comparing the value of this measure with the egress limit, you can be forewarned if the capacity limits set for Queue storage need to be increased to ensure peak storage performance. |

||||||||||

|

Queue success server latency |

Indicates the average time taken by the Queue storage in this account to process requests successfully. |

Secs |

Server latency of Queue storage is the interval from when Queue storage receives the last packet of the request until the first packet of the response is returned from that storage. In simpler terms, it means the time taken by Queue storage to process any given request. End-to-end latency of Queue storage is the interval from when the Queue storage receives the first packet of the request until that storage receives a client acknowledgment on the last packet of the response. In simpler terms it means the round trip of any operation starting at the client application, plus the time taken for processing the request at the Queue storage and then coming back to the client application. If you find that end-to-end latency is significantly higher than server latency, then investigate and address the source of the additional latency. The main factor influencing end-to-end latency is operation size. It takes longer to complete larger operations, due to the amount of data being transferred over the network and processed by Queue storage. Client configuration factors such as concurrency and threading also affect latency. Client resources including CPU, memory, local storage, and network interfaces can also affect latency. If the server latency is abnormally high, then it could imply that the Queue storage is experiencing serious processing deficiencies. |

||||||||||

|

Queue success end-to-end latency |

Indicates the average end-to-end latency of successful requests made to the Queue storage in this account. |

Secs |

|||||||||||

|

Queue availability |

Indicates the percentage availability of this storage account's Queue storage. |

Percent |

Ideally, the value of this measure should be 100%. This means that the Queue storage service is available. Any value lower than 100% is a cause for concern, as it implies reduced availability of the service. All unexpected errors result in reduced availability. |

||||||||||

|

Amount of storage used by snapshots |

Indicates the amount of storage space in this storage account that is used by snapshots. |

MB |

If the Storage account used capacity measure reports an unusually high value for an account, then compare the value of this measure with that of the Used file capacity, Used table capacity, Used queue capacity, and the Used blob capacity measures to accurately identify the type of storage that is responsible for the abnormal usage - is it file storage? blob storage? table storage? queue storage? or snapshots? |

||||||||||

|

Total storage capacity |

Indicates the total amount of storage space allocated to this account. |

GB |

|

||||||||||

|

Available storage capacity |

Indicates the amount of storage space in this account that is available for use. |

GB |

|

||||||||||

|

Storage capacity utilization |

Indicates the percentage of storage space that is utilized in this account. |

Percent |

A value close to 100 percent indicates that the storage account is running out of storage space. Compare the value of this measure across storage accounts to identify the storage account that is running out of storage space. |

||||||||||

|

Request rate |

Indicates the rate at which the read/write requests are processed in storage space. |

Requests/sec |

|

||||||||||

|

Ingress rate |

Indicates the rate at which data is written into storage space. |

MB/sec |

Ideally, the values of the Ingress rate and Egress rate measures should be high, as this indicates optimal read/write performance.

|

||||||||||

|

Egress rate |

Indicates the rate at which data is read from storage space. |

MB/sec |

|||||||||||

|

IOPS utilization |

Indicates the percentage of input/output operations executed in storage space. |

Percent |

A very high value of this measure is required to avoid throttling issues. |
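Two of the interpretations in the table above lend themselves to simple checks: comparing server latency with end-to-end latency, and deriving capacity utilization from total and available capacity. The Python sketch below is illustrative only. The function names and the threshold values (`server_threshold`, `max_gap`) are assumptions made for this example, not values used by the test, and the utilization formula assumes the percentage is computed from the Total storage capacity and Available storage capacity measures.

```python
def diagnose_latency(server_secs: float, e2e_secs: float,
                     server_threshold: float = 0.1,
                     max_gap: float = 0.2) -> str:
    """Classify where latency most likely originates (thresholds are assumed)."""
    if server_secs > server_threshold:
        # The storage service itself is slow to process requests.
        return "storage-side"
    if e2e_secs - server_secs > max_gap:
        # Processing is fast, so the extra time is spent outside the
        # service: operation size, client resources, or the network.
        return "client or network"
    return "normal"


def utilization_percent(total_gb: float, available_gb: float) -> float:
    """Percentage of allocated capacity in use."""
    if total_gb <= 0:
        raise ValueError("total capacity must be positive")
    return 100.0 * (total_gb - available_gb) / total_gb
```

For instance, `diagnose_latency(0.02, 0.5)` returns `"client or network"`, and `utilization_percent(100, 25)` returns `75.0`.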

Use the detailed diagnosis of the Provisioning state measure to know the location of the storage, the storage type, when it was created, and more.

Figure 3: The detailed diagnosis of the Provisioning state measure reported by the Azure Storage Details test