Talk about performance monitoring to any system admin or IT manager and one of the first questions they will ask is whether you are using agent or agentless monitoring. The moment you hear that agent vs agentless monitoring question, you know that they are interested in an agentless monitoring solution. This is usually driven by the fear of having agents on critical servers in the infrastructure!

In this article, we will discuss:

- How agentless monitoring evolved

- Why complete agentless monitoring may not be feasible anymore

- Why IT teams must rely on a combination of agent and agentless monitoring techniques

Truth be told, we don’t think that the discussion should be around agent vs agentless monitoring because there are pros and cons to each. In fact, as we’ll discuss in this article, a combination approach might be the best option.

The Advent of Agentless Monitoring

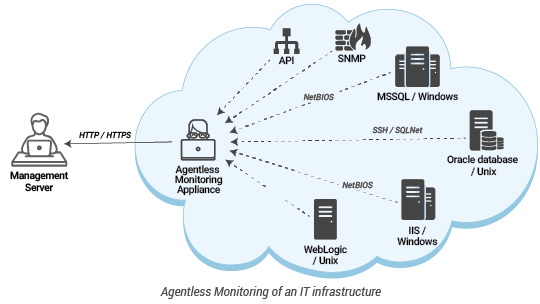

Monitoring of network devices has always been agentless, using SNMP, packet sniffers, flow analyzers, etc. Servers and applications were different: until the late 90s, almost all performance monitoring of servers and applications was agent-based. One of the first agentless monitoring solutions was Mercury SiteScope (now Micro Focus SiteScope). Since then, several agentless monitoring solutions have emerged.

Agentless monitoring is attractive because:

- Agents used to be heavyweight: Monitoring in the 90s was dominated by the Big 4 vendors – HP, BMC, CA, and IBM. During those times, monitoring agents used to be heavyweight, taking up a lot of CPU and memory resources on the servers they ran on. Furthermore, when an agent malfunctioned, it affected the performance of the server and the applications it monitored.

- Agentless monitoring is easier to maintain: Deploying and maintaining agents was also complicated at the time. Agentless monitoring allowed administrators to monitor servers and applications without installing any software on the servers being monitored. Protocols and APIs like SSH, WMI, and others were used to collect the same metrics that agents would collect. Agentless monitoring is often performed using a dedicated appliance in the network, so only one device, the appliance performing the monitoring, had to be managed. Upgrades were simple as well: only the appliance had to be upgraded.
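
As a rough illustration of the agentless approach described above, the sketch below collects a load metric over SSH from the monitoring side, with nothing installed on the target. It assumes a Linux target that exposes `/proc/loadavg`, key-based SSH access, and a local OpenSSH client. The function names (`run_over_ssh`, `parse_loadavg`, `poll_load`) are hypothetical and are not taken from any product.

```python
import subprocess
from typing import Callable, Dict, List


def run_over_ssh(host: str, command: List[str]) -> str:
    """Run a command on a remote host using the local OpenSSH client.

    Assumes key-based authentication; BatchMode=yes makes ssh fail
    instead of prompting for a password.
    """
    result = subprocess.run(
        ["ssh", "-o", "BatchMode=yes", host, *command],
        capture_output=True,
        text=True,
        check=True,
        timeout=30,
    )
    return result.stdout


def parse_loadavg(text: str) -> Dict[str, float]:
    """Parse the first three fields of Linux /proc/loadavg."""
    one, five, fifteen = text.split()[:3]
    return {"load1": float(one), "load5": float(five), "load15": float(fifteen)}


def poll_load(
    host: str, runner: Callable[[str, List[str]], str] = run_over_ssh
) -> Dict[str, float]:
    """Agentless poll: the remote side only runs a standard command."""
    return parse_loadavg(runner(host, ["cat", "/proc/loadavg"]))
```

Calling `poll_load("server01")` (a placeholder host name) from the monitoring appliance would return the three load averages. The `runner` parameter exists only so the transport can be swapped out, for example in tests.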

Complete Agentless Monitoring is No Longer Feasible

In the last two decades, IT infrastructures have changed significantly and agentless monitoring in its earlier form is no longer feasible for several reasons:

Increased focus on security limits the applicability of agentless monitoring: IT environments today are constantly scrutinized for how they handle security. Agentless monitoring requires remote access to the servers and applications being monitored from one location. Communication from the agentless monitoring appliance to the target servers and applications is possible only if a number of TCP ports are open. This is often a challenge, particularly in security-conscious environments. Also, the agentless monitoring appliance is a single point of vulnerability: if this appliance is compromised, an attacker could access all the servers and applications being monitored.
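
To make the port requirement concrete, here is a minimal sketch of a reachability check that a monitoring appliance could run before attempting agentless collection. The helper names are hypothetical, and the ports to test depend on the protocol in use (for example, 22 for SSH, or 135 for the RPC endpoint mapper that WMI relies on).

```python
import socket
from typing import Dict, Iterable


def port_is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_ports(host: str, ports: Iterable[int], timeout: float = 2.0) -> Dict[int, bool]:
    """Check every port the agentless collector would need on one target."""
    return {port: port_is_reachable(host, port, timeout) for port in ports}
```

Every port that has to return `True` here is a firewall rule that must be justified to the security team, which is the practical cost this point describes.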

Increased security in modern operating systems: Every new version of an operating system adds security restrictions. This means that deploying just an agentless monitoring appliance is no longer sufficient: additional configuration must be performed on all the monitored servers to enable the appliance to connect to them. As a result, configuring agentless monitoring is not a breeze anymore.

Agentless monitoring does not have zero overhead: It is now well understood that agentless monitoring also has overhead. Most often, the same interfaces, APIs, and commands used by an agent are also used for agentless monitoring, so the data collection overhead is not negligible. In addition, with agentless monitoring, the raw monitoring data is transmitted from the monitored server to the agentless monitoring appliance, which can consume significant bandwidth. Consider a log file being monitored: an agent installed on the server can easily track new additions to the log, whereas an agentless monitoring appliance will need to fetch the entire log each time and then look for the recent changes in it. This results in significant network overhead.
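
The log file example can be sketched as follows. This is a simplified local simulation, not a real remote transfer: `LogTailer` stands in for an agent that remembers its read offset, and `fetch_whole_log` stands in for a naive agentless poll that pulls the full file every time. All names are hypothetical.

```python
import os
import tempfile
from typing import Tuple


class LogTailer:
    """Agent-style reader: remembers its offset and returns only new bytes."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.offset = 0

    def read_new(self) -> bytes:
        # If the file shrank (truncated or rotated), start again from the top.
        if os.path.getsize(self.path) < self.offset:
            self.offset = 0
        with open(self.path, "rb") as f:
            f.seek(self.offset)
            data = f.read()
            self.offset = f.tell()
        return data


def fetch_whole_log(path: str) -> bytes:
    """Naive agentless-style poll: the entire file is transferred each time."""
    with open(path, "rb") as f:
        return f.read()


def compare_transfer(polls: int, line: bytes) -> Tuple[int, int]:
    """Append one line per poll and count the bytes each approach moves."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "app.log")
        open(path, "wb").close()
        tailer = LogTailer(path)
        incremental = full = 0
        for _ in range(polls):
            with open(path, "ab") as f:
                f.write(line)
            incremental += len(tailer.read_new())
            full += len(fetch_whole_log(path))
        return incremental, full
```

With one new line per poll, the incremental reader moves an amount of data proportional to the number of polls, while the full fetch grows roughly with the square of it, because every poll re-reads everything written so far.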

Challenges in troubleshooting problems due to agentless monitoring: With an agent-based monitoring approach, it is possible to quantify the overhead of monitoring: one can track the resources used by the agent and any executables it spawns. With an agentless monitoring approach, there is no single process that performs monitoring on a server, so it is not straightforward to quantify the monitoring overhead. Hence, any performance issues caused by the monitoring system are harder to troubleshoot.
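
As a hedged illustration of why agent overhead is easy to quantify, the sketch below shows how a process on a Unix-like system can report its own CPU and memory usage plus that of the child processes it has spawned and waited for, using Python's standard `resource` module. The function names are hypothetical, and this is not how any particular agent is implemented.

```python
import resource
import subprocess
import sys
from typing import Dict, List


def agent_overhead() -> Dict[str, float]:
    """Report resources used by this process and its finished children."""
    own = resource.getrusage(resource.RUSAGE_SELF)
    children = resource.getrusage(resource.RUSAGE_CHILDREN)
    return {
        "cpu_seconds_self": own.ru_utime + own.ru_stime,
        "cpu_seconds_children": children.ru_utime + children.ru_stime,
        # ru_maxrss is reported in kilobytes on Linux and in bytes on macOS.
        "max_rss_self": float(own.ru_maxrss),
    }


def run_collector(command: List[str]) -> str:
    """Spawn a helper executable and wait for it to finish.

    Once the child has been waited for, its CPU time is included in
    RUSAGE_CHILDREN of the calling process.
    """
    completed = subprocess.run(command, capture_output=True, text=True, check=True)
    return completed.stdout
```

Because everything the agent does runs under one process tree, a single call like `agent_overhead()` accounts for it. With agentless collection, the equivalent work is spread across remote login sessions and system services on the monitored server, so there is no single place to measure.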

Depth of monitoring is severely reduced with agentless monitoring: An agent running on a server, or one integrated with an application, can collect a lot of metrics. For instance, an agent installed on an application's server can observe all requests to that application. Agentless monitoring cannot support this capability, so the depth of monitoring is severely reduced in many cases. Also, many operating systems and applications expose few remote APIs, which makes agentless monitoring even more difficult.

Because of all the above reasons, it is no longer feasible to have a monitoring system that is 100% agentless.

When is Agentless Monitoring Preferred?

The above arguments are made only to highlight the challenges in adopting agentless monitoring. They do not mean that all monitoring must be agent-based. There are several scenarios where agentless monitoring is still required:

- Monitoring of network devices (routers, switches, firewalls, etc.) must be predominantly agentless – using SNMP polling, SNMP traps, flow data, etc.

- Monitoring of storage platforms: It is not possible to install agents on storage devices. Command line interfaces, SNMP, and SMI-S are some of the ways in which storage platforms can be monitored in an agentless manner.

- Monitoring of advanced networking platforms: Devices like Citrix NetScaler, F5 BigIP, etc., perform a wide range of functions. These devices act as network accelerators, VPN concentrators, load balancers, protocol proxies, etc. These devices run as appliances and administrators will not be able to install agents on them. Hence, an agentless monitoring approach is preferred.

- Monitoring of virtualization platforms (VMware vSphere, Citrix Hypervisor, Nutanix Acropolis, etc.) is also agentless. Most virtualization vendors do not recommend installing agents on their server platforms. REST/Web service APIs provide a great degree of detail about the performance of the hypervisors and VMs running on them.

- Monitoring of cloud platforms (AWS, Azure, Google Cloud, Alibaba Cloud, etc.) must be agentless. Customers do not have direct access to the cloud infrastructure. So, agent-based monitoring is not an option. APIs such as CloudWatch are used to monitor the cloud platforms.

- Monitoring of SaaS applications (Salesforce, Microsoft 365, etc.) also must be agentless. APIs from the respective SaaS platform vendors must be used.

- Monitoring of real user experience for web applications: Real User Monitoring (RUM) for web applications is based on JavaScript injection techniques. This is done in an agentless manner: the JavaScript executes in client browsers and sends performance metrics to a RUM collector, which aggregates them and reports performance data to the management server.
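
The collector side of the RUM flow in the last item can be sketched as below. It assumes each browser beacon arrives as a JSON string with a `page` and a `load_ms` field. Both field names and the function name are assumptions made for illustration, not the format of any specific RUM product.

```python
import json
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List


def aggregate_beacons(raw_beacons: Iterable[str]) -> Dict[str, Dict[str, float]]:
    """Group browser beacons by page and summarize page load times."""
    by_page: Dict[str, List[float]] = defaultdict(list)
    for raw in raw_beacons:
        beacon = json.loads(raw)
        by_page[beacon["page"]].append(float(beacon["load_ms"]))
    # One summary row per page is what would be reported upstream.
    return {
        page: {
            "count": len(samples),
            "avg_load_ms": mean(samples),
            "max_load_ms": max(samples),
        }
        for page, samples in by_page.items()
    }
```

Only the aggregated rows need to travel to the management server, and nothing is installed on the end user's machine beyond the script the page already delivers.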

A Combination of Agent and Agentless Monitoring is Required

Legacy operating systems, such as Windows and Unix, do not support the type of APIs that new-age technologies like virtualization, containers, and cloud platforms have. Hence, an agent-based approach is preferred to monitor Windows and Unix platforms and applications running on them. An agent running on a system offers other benefits as well. For instance, remote actions on a server are more effectively performed using an agent on the system. Accurate auto-discovery of applications and application instances running on a server is only possible using an agent on the system (the agent can access application configuration files, registry entries, etc., locally).

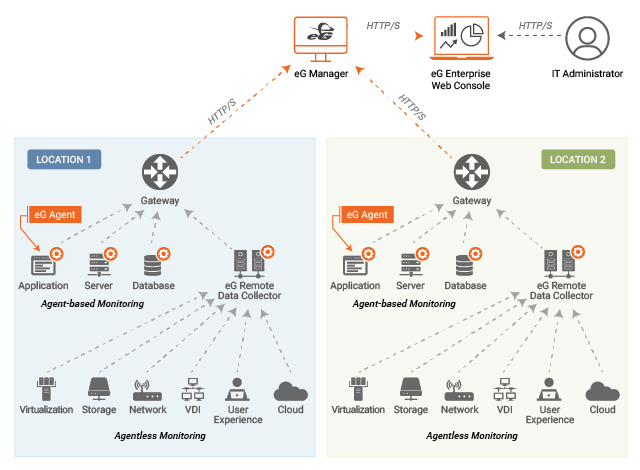

The above discussion highlights why eG Enterprise relies on a combination of agent and agentless monitoring. To overcome the typical challenges faced by administrators in deploying agents, eG Enterprise includes several capabilities:

- eG Enterprise agents are auto-upgradable from the management console. Administrators do not have to spend time manually upgrading agents.

- It uses a universal monitoring architecture. One agent on a server monitors all the applications running on it. The same agent can be deployed on all servers in the infrastructure. Therefore, administrators do not have to worry about deploying one management pack on one server and another on a second server.

- In the case of cloud-hosted monitoring, for organizations that need the monitoring data to be transmitted out of their network through a single point, the agents can be configured to communicate through a web proxy server.

- The agents can be controlled remotely from the management server itself.

- The agents perform auto-discovery. So, the target infrastructure can be managed automatically with minimal manual intervention.

Concluding Remarks

In conclusion, it is not about agent vs. agentless monitoring anymore. Organizations must judiciously use a combination of agent and agentless monitoring, focusing on what to monitor and how to analyze the metrics collected, rather than worrying about whether they will use agent-based or agentless monitoring.
